Imagine this dreadful scenario: an impromptu office birthday party. It doesn’t matter whose.

You work at a tech company struggling to stay relevant. The business made it through Microsoft NT, Y2K, and The Great Recession. You figure the gig will last.

Suddenly quieting the room, like the unexpected appearance of a 5G hotspot, is a question from your foolhardy cubicle mate: “what is your opinion on artificial intelligence?”

You want to squirm, but you can’t. It would be rude.

The conversation begins to swell.

One coworker’s mental image is an unhealthy mix of distasteful and campy sci-fi.

Another downplays the situation, crediting the same paranoia running our government.

At least one person is thinking about buying a second Amazon Alexa device for the bedroom.

“Hey, Alexa? Play the sound of impending doom. That’ll put me in the mood.”

Technological advancements have allowed the AI debate to grow faster than the actual technology fueling it. At this moment, no one is winning the argument.

A battle line exists, but it’s more of a loose barrier gliding across a spectrum of gray matter than a trench in the sand. Because of this, the debate is more complicated. Throw in the “informed” opinions of famous faces and the debate is far from being settled:

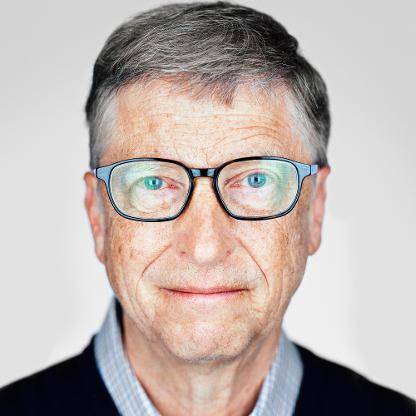

Bill Gates

The founder of Microsoft is somewhat of a moderate in the tech world. People generally feel neutral about him. That’s counterintuitive, given that his opinion on artificial intelligence has ranged from one end of the spectrum to the other.

“I am in the camp concerned about super intelligence,” Gates wrote in a Reddit AMA from 2015. “First the machines will do a lot of jobs for us and not be super intelligent. That should be positive if we manage it well. A few decades after that though the intelligence is strong enough to be a concern.”

Gates’ tune changed a few years later, when he expressed interest in promoting AI to advance economic opportunity. He was adamant about reducing the cost of labor without affecting the workforce.

“You’ll be far more efficient using resources, you’ll be far more aware of what’s going on,” Gates said to Fox Business in 2018. “And it’s very cheap now. Computers can see and hear as well as humans can.”

Microsoft recruited hip-hop artist Common for commercials detailing the possibility of AI in future Microsoft endeavors. It would appear the tech giant is choosing to neglect no options.

Eric Horvitz

Eric Horvitz, manager of Microsoft Research Labs, echoed Bill Gates’ sentiment. He is in charge of AI research Microsoft could use in its services or products.

“…in the end we’ll be able to get incredible benefits from machine intelligence in all realms of life, from science to education to economics to daily life,” Horvitz said to the BBC in 2015.

When asked about the AI takeover, Horvitz isn’t as concerned as others. “I fundamentally don’t think that’s going to happen,” Horvitz said.

Elon Musk

One of the most talked-about moguls of our time, SpaceX and Tesla Inc. founder Elon Musk has often discussed the possibilities of future technology. Of course, it is usually in reference to one of his many companies. But when it comes to AI, Musk evangelizes against this evil like a Southern Baptist street preacher trying to stomp out The Devil.

“I think human extinction will probably occur, and technology will likely play a part in this,” Musk told Vanity Fair in 2017.

“We’re already cyborgs,” Musk said. “Your phone and your computer are extensions of you, but the interface is through finger movements or speech, which are very slow.”

He elaborated on his prediction in an episode of The Joe Rogan Experience podcast.

Yes. THAT podcast.

Joe Rogan Experience-Elon Musk

“It’s less of a worry than it used to be, mostly due to taking more of a fatalistic attitude,” Musk said. “It’s not necessarily bad. It’s just going to be outside of human control.”

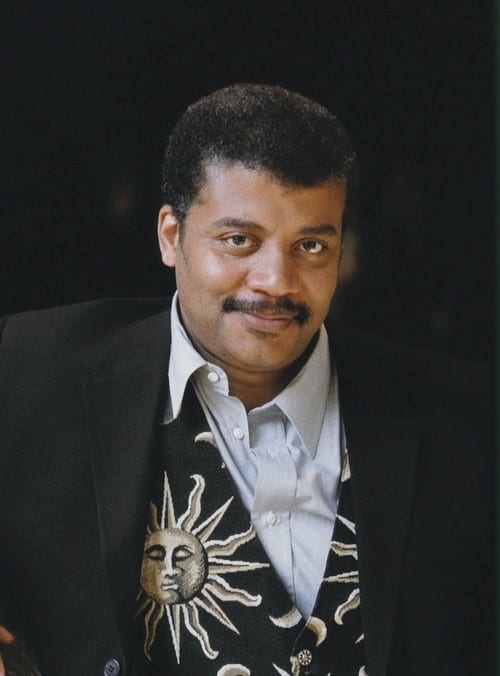

Neil deGrasse Tyson

The Stan Lee of the scientific community, Neil deGrasse Tyson will often give his take on many topics swirling around the technological zeitgeist. Let’s put it this way: most astrophysicists don’t bother voicing their thoughts on AI.

Previously, Tyson took an almost laissez-faire approach to artificial intelligence.

“I’m not as fearsome of AI as others are,” Tyson said on an episode of Rogan’s podcast in 2017.

Joe Rogan Experience-Neil Degrasse Tyson

He furthered this point at the Isaac Asimov Memorial Debate in 2018.

“If it starts getting unruly or out of hand, I just unplug it. Or, since this is ‘Merica, I can just shoot it,” Tyson said.

But in that same engagement, Tyson went on to explain how an episode of “Making Sense,” a podcast hosted by Sam Harris, shifted his opinion.

“Sam Harris mentioned my comment to him,” Tyson said. “You just leave it in a box. It’s safe there. And what the guy said is ‘it gets out of the box every time.’

“It could pose an argument where I am convinced that I need to take it out of the box. Then it controls the world.”

“The guy” Tyson references is Eliezer Yudkowsky, AI researcher and author. Yudkowsky referenced the hypothetical experiment “AI in a Box.” The idea is that humans would keep an AI contained: not connected to the internet, not housed inside a deadly robot, and not given access to anything outside of our control. Yudkowsky dismissed that as a viable safeguard.

“That’s not going to save you from something that is significantly smarter than you,” Yudkowsky said. “Humans are not secure software.”

“The thing that makes something smarter than you dangerous, is you cannot see everything it might try.”

Sam Harris Podcast- Eliezer Yudkowsky

Stephen Hawking

Even though he is mostly known for his thoughts on time, or at least the brief history of it, Stephen Hawking was prominent in the AI debate.

“The development of full artificial intelligence could spell the end of the human race,” Hawking told the BBC in 2014. “It would take off on its own and redesign itself at an ever-increasing rate.”

Due to his Amyotrophic Lateral Sclerosis, Hawking needed an advanced speech program to communicate with others. Before his passing, the system he used to speak got an upgrade in an effort to communicate faster.

The system used machine learning, a form of artificial intelligence that lets software adapt within the limits of its own programming. Hawking was asked about it because he had been outspoken about artificial intelligence in the past. He went so far as to sign an open letter presented by the Future of Life Institute calling on the scientific community to be more responsible in AI research and development. The letter was signed by many contemporaries, including Horvitz, Musk and Yudkowsky.

This led to a divergence in the AI debate. Often when experts refer to AI in interviews or conversation, they are referencing machine learning. The reason for this is sometimes just simplification for the casual audience. In the tech world, the term “machine learning” is used very specifically. In the common world, the two phrases are used interchangeably.

So which is which? Which one is a concern?

Steve Wozniak

Apple cofounder Steve Wozniak is regarded by many in the tech community as having a dependable opinion. He has warned about the temptation that advancements in AI can bring to everyday life:

“If we build these devices to take care of everything for us, eventually they’ll think faster than us and they’ll get rid of the slow humans to run companies more efficiently,” Wozniak said to the Australian Financial Review in 2015. “Computers are going to take over from humans, no question.”

Much like Gates, Wozniak changed his tune on AI. Even though a program may have a higher processing function than the human brain, he said it “doesn’t make up for cognitive ability developed through experience and time.”

To Wozniak, it’s not simple to abruptly replace everyone in society.

“For machines to override human beings, they would have to do every step in society, of digging ores out of quarries and refining materials and building up all the products and everything we have in our lives, and making clothes and food,” Wozniak said at the Nordic Business Forum in 2018.

“That would take hundreds of years to change the infrastructure.”

Alex Garland

Not many filmmakers seem to have a grasp on what is actually happening in the tech world. At best, they can hope to have their art resemble something close to what people know. This isn’t an effort to be accurate so much as relatable.

That’s not the case for Alex Garland, writer and director of the 2015 movie “Ex Machina”. In it, two men struggle morally and physically over a machine named Ava, who is designed to look like a young and attractive woman and have the cognitive ability of any regular human.

“We have laptops and cellphones and tablets, and most of us don’t understand how they work. But the devices seem to understand how we work,” Garland said in an opinion piece he wrote for the New York Times in 2015. “We locate the anxiety in the machines, which translates as anxiety about AI.”

“But there is a mistake here,” Garland said. “The machines in question are not strong AI. They are weak. They have no motivation, no intention; they’re neutral.”

Garland pointed out that the fear is not necessarily in machines taking over, but a person misusing such high intelligence. Even Musk stated how the danger is not AI “taking over,” but being used as a weapon by humans on other humans.

As the 21st century unfolds, the debate is still muddled. Seemingly black-and-white issues are morphing into complicated (and drab) ones. At this point, a clear stance shared by the erudite leaders of today would be considered a luxury.